Announcing our AI agent that models your sales reality from voice data

AI is about to run the customer journey. Sales, support, onboarding. Agents will handle it. But these agents will need something that doesn’t exist yet: a structured model of how customers actually talk, object, decide, and buy.

That backbone has to be built, and Voiceops is building it.

Human reps generate $4 trillion in sales every year. Yet 99% of those conversations are dark data. Unstructured, unmeasured, disconnected from every system responsible for revenue. Teams can’t see why reps convert, which objections derail deals, which messages land, or how different customer segments behave. The richest signal in the business is trapped in phone calls.

This is the bottleneck that will determine whether AI agents succeed or fail at revenue. They won’t succeed by guessing. They’ll need the products, the objections, the risk signals, the winning patterns. Structured, machine-readable, flowing into every system that touches the customer.

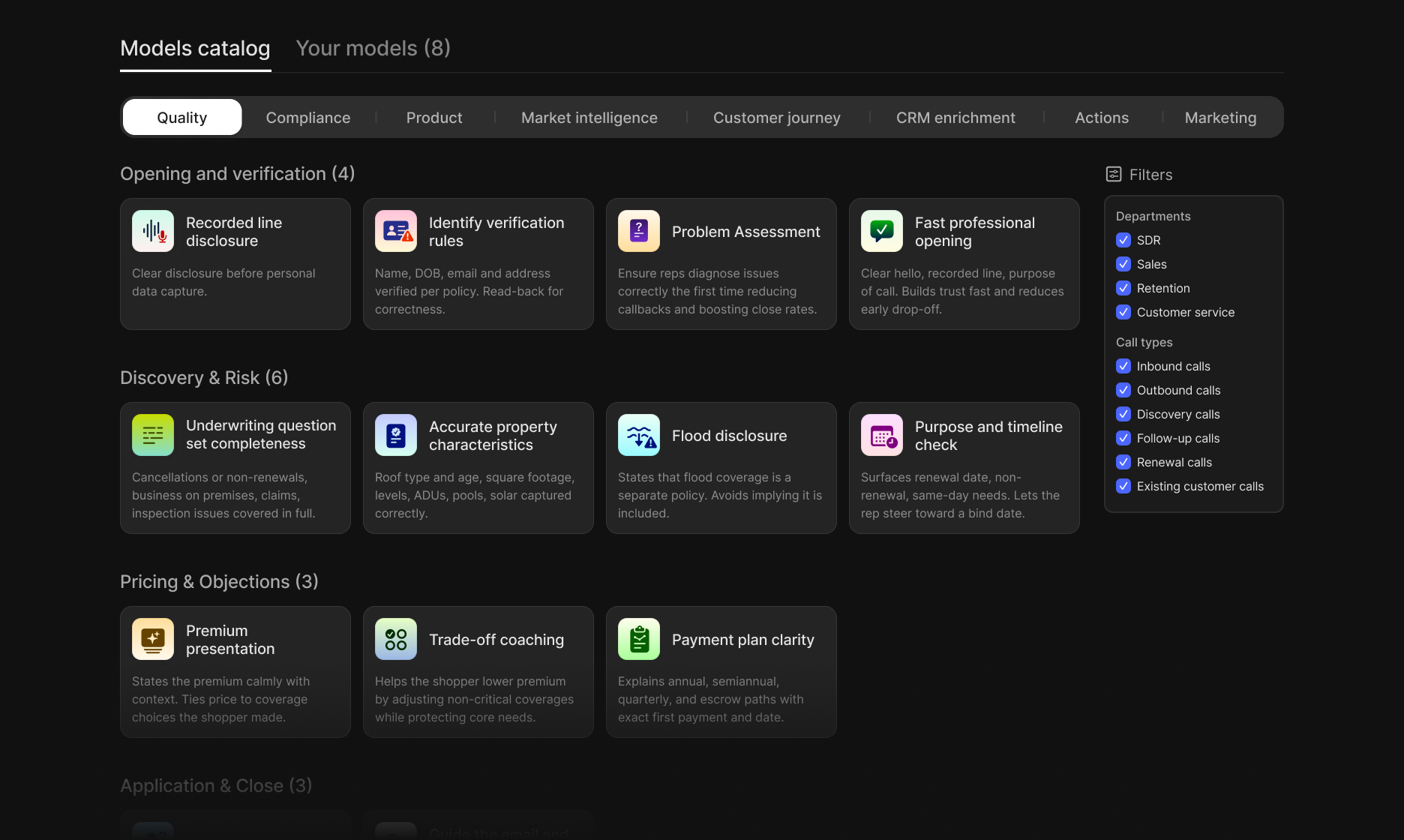

Today we’re announcing our AI agent for voice data. It scans tens of thousands of historical calls and constructs a complete model of your sales reality. Zero prompts, zero labeling, zero manual taxonomy. The agent deduces your products, identifies your risks, surfaces edge cases, and catches nuances that would take a human team weeks to discover. It does this in hours.

Take one example. We built a model to detect military affiliation for an education company. A human would spend days defining categories and discovering edge cases through trial and error. The agent figured out the schema by observing what was actually discussed in calls, defined the categories, and surfaced the ambiguous cases that needed clarification. The output was more rigorous than any human-led effort.
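To make "schema" concrete, here is a minimal sketch of what a structured label for that use case might look like once it leaves the call transcript. Everything in it is an assumption for illustration: the category names, the `CallLabel` record, and the `review_queue` helper are hypothetical and are not the schema the agent actually produced.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class MilitaryAffiliation(Enum):
    # Hypothetical categories, chosen only to illustrate the idea.
    ACTIVE_DUTY = "active_duty"
    VETERAN = "veteran"
    DEPENDENT = "dependent"
    NONE_MENTIONED = "none_mentioned"
    AMBIGUOUS = "ambiguous"  # edge cases that need a human decision


@dataclass
class CallLabel:
    call_id: str
    affiliation: MilitaryAffiliation
    evidence: Optional[str] = None  # transcript snippet supporting the label

    def needs_review(self) -> bool:
        # Ambiguous cases are surfaced for clarification, not guessed at.
        return self.affiliation is MilitaryAffiliation.AMBIGUOUS


def review_queue(labels: List[CallLabel]) -> List[str]:
    """Return the IDs of calls whose label needs human clarification."""
    return [label.call_id for label in labels if label.needs_review()]


# Made-up sample records, not real call data.
labels = [
    CallLabel("call-001", MilitaryAffiliation.VETERAN, "I served for eight years"),
    CallLabel("call-002", MilitaryAffiliation.AMBIGUOUS, "my brother is in the Navy"),
    CallLabel("call-003", MilitaryAffiliation.NONE_MENTIONED),
]

print(review_queue(labels))
```

The point of the sketch is the shape, not the specifics: each call becomes a typed record with a category and supporting evidence, and ambiguous cases are kept as their own category so they can be routed to a person.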

Once structured, voice data flows directly into CRMs, QA workflows, routing systems, and lead scoring. Revenue teams can make smarter decisions and act on market signals to lift conversion, drive efficiency, and improve retention.

This is the intelligence layer that modern revenue teams need to perform, and that future AI agents will depend on.

When AI agents finally sell like the best human reps, it won’t be because they were told what to do. It will be because they learned from a model of real behavior. The next decade of revenue depends on it.

Ready to build a more intelligent business on Voiceops?

Talk to our team about turning your customer conversations into the intelligence your company runs on.